In the first blog, we talked about accelerating military symbology development across the Luciad Portfolio.

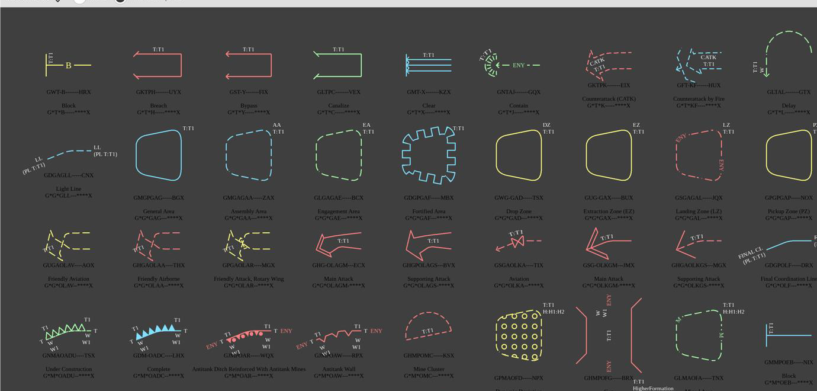

As a result, we now support the visualization and interactive editing of 10,000 military symbols across six visualization technologies¹ in four products.

On top of that, we maintain several versions of every product, so we can easily fix or improve releases that have been around for years.

Let’s take a look at how we ensure and retain consistency, now and in the future.

Automated Testing

Automated tests are important to any product, but for military symbology they’re essential.

You can’t manually test 10,000 symbols and cross-reference them with the specifications every time you make a software change. On top of that, you want to test visualization on different operating systems and hardware/software configurations.

Luckily, the Luciad product portfolio is backed by a capable CI infrastructure that runs hundreds of thousands of tests every day.

Still, we had to overcome several challenges, the biggest of which was making robust and reliable visualization tests.

A handful of changed pixels can indicate a serious bug: a missing label or stroke problem. At the same time, we don’t want to deal with minor changes caused by running the test on a different JDK, machine, or operating system.

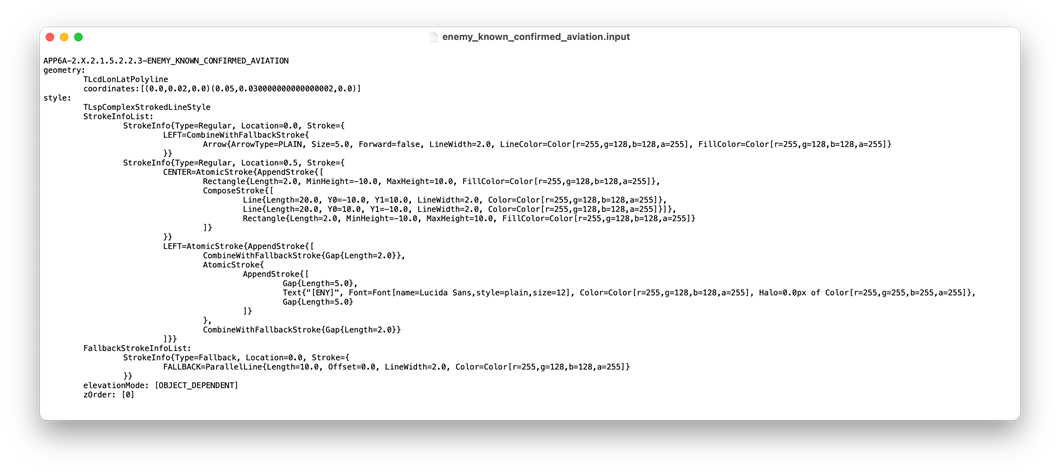

Therefore, we chose to use the visualization input as a reference, not the visualization output. This means we put our trust in the fundamental visualization components of our API.

We already have pixel-perfect tests in place for rendering complex icons, shapes, and text, so the symbology tests focused on how a specific symbol maps to primitive drawing commands.
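To make the idea concrete, here is a minimal sketch of an input-based test in Python. It is not the Luciad API: `RecordingCanvas`, `DrawCommand`, `draw_symbol`, and `diff_commands` are hypothetical names invented for illustration. The canvas records primitive drawing commands instead of rasterizing them, and the test compares the recorded list against a stored reference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DrawCommand:
    """One primitive drawing instruction (hypothetical format)."""
    kind: str    # e.g. "line" or "text"
    args: tuple  # geometry and style parameters


class RecordingCanvas:
    """Hypothetical canvas that records commands instead of drawing pixels."""

    def __init__(self):
        self.commands = []

    def line(self, x1, y1, x2, y2, color="black", width=1):
        self.commands.append(DrawCommand("line", (x1, y1, x2, y2, color, width)))

    def text(self, x, y, content, color="black"):
        self.commands.append(DrawCommand("text", (x, y, content, color)))


def draw_symbol(canvas, label):
    """Stand-in for a symbol renderer: a square frame plus one label."""
    corners = [(0, 0), (10, 0), (10, 10), (0, 10)]
    for (x1, y1), (x2, y2) in zip(corners, corners[1:] + corners[:1]):
        canvas.line(x1, y1, x2, y2, width=2)
    canvas.text(12, 5, label)


def diff_commands(reference, actual):
    """Return (missing, unexpected) commands, preserving their order."""
    missing = [c for c in reference if c not in actual]
    unexpected = [c for c in actual if c not in reference]
    return missing, unexpected


# Record the reference once, from a known-good renderer.
reference_canvas = RecordingCanvas()
draw_symbol(reference_canvas, "A1")
reference = list(reference_canvas.commands)

# Simulate a regression in which the label is no longer drawn.
actual = [c for c in reference if c.kind != "text"]
missing, unexpected = diff_commands(reference, actual)
```

Because the comparison works on commands rather than pixels, the failure names the missing label directly, and anti-aliasing or font-rendering differences between machines never enter the picture.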

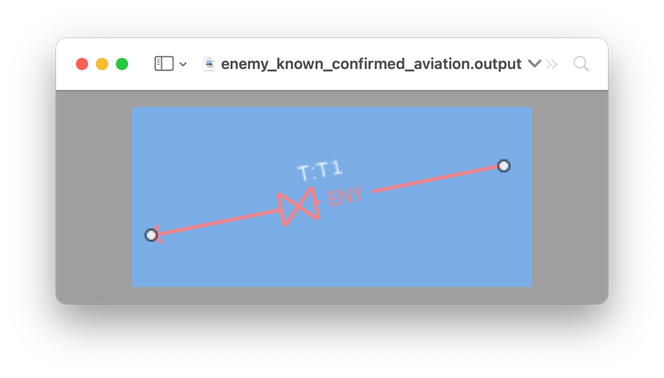

The visualization input for an APP-6A symbol.

The visualization output of the same symbol.

Benefits of using the visualization input as a reference:

– Tests are very stable, even across multiple software and hardware configurations.

– If there’s a failure, we can quickly identify the source of the problem because we can see what changed in both the input and the output.

– The visualization input gives us deeper insight into how the symbol works without having to start a debugger.

It doesn’t end here, though: we have similar tests for labeling and editing.

The second challenge was to act efficiently on test failures. Even though the tests were stable, minor changes can happen that affect many tests.

To avoid the Broken Window Syndrome², we had to act fast on any test failures to keep the build green³ at all times.

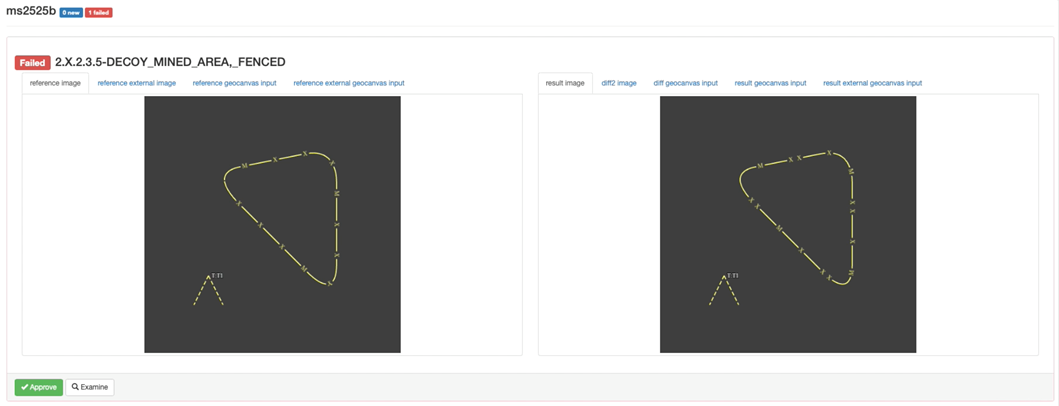

To do this, we developed a smart screenshot test verifier application for our R&D department. The verifier application integrates directly into our unit test results page so we can examine and approve failures without having to worry about the location of the test results. You can see it in action below.

Handling a visualization regression.

The failure shows that we just introduced a bug that collapsed one of the line segments. Didn’t quite catch that in the result image on the right side? Not to worry, a differential image is available to compare and pinpoint all the pixels that are different from the reference image.
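A differential image of this kind can be sketched in a few lines of Python. This is an illustrative assumption, not the verifier's actual implementation: images are modeled as plain lists of grayscale rows, and `diff_image` and its `tolerance` parameter are hypothetical.

```python
def diff_image(reference, actual, tolerance=0):
    """Compare two grayscale images given as lists of rows of ints.

    Returns (mask, changed): mask[y][x] is True where the pixel values
    differ by more than `tolerance`; changed lists those (x, y) positions.
    """
    same_height = len(reference) == len(actual)
    if not same_height or any(len(r) != len(a) for r, a in zip(reference, actual)):
        raise ValueError("images must have the same dimensions")
    mask = [
        [abs(r - a) > tolerance for r, a in zip(ref_row, act_row)]
        for ref_row, act_row in zip(reference, actual)
    ]
    changed = [
        (x, y)
        for y, row in enumerate(mask)
        for x, flag in enumerate(row)
        if flag
    ]
    return mask, changed


# Reference: a horizontal line spanning the full width of row 1.
reference = [
    [0, 0, 0, 0],
    [255, 255, 255, 255],
    [0, 0, 0, 0],
]
# Actual: the right half of the line has collapsed, plus one pixel of noise.
actual = [
    [0, 0, 0, 0],
    [255, 255, 0, 0],
    [0, 0, 1, 0],
]

mask, changed = diff_image(reference, actual, tolerance=2)
```

With a tolerance of 2, `changed` contains only the two pixels of the collapsed segment; the one-level noise pixel is ignored. Setting the tolerance to 0 would flag it as well, which illustrates the trade-off between catching small bugs and ignoring platform noise.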

As you can see, approval is as easy as clicking a button.

Cross-Product Maintenance

You might have noticed that the screenshot failure above shows some other tabs. That’s because we also include external references such as screenshots from other products and/or the actual specification. This makes things much easier for people who aren’t domain experts. They can now make an informed decision about whether a screenshot failure constitutes an actual regression and pinpoint the products and technologies that have to be patched.

The next step is a set of automated consistency checks, in case someone makes changes in one product and forgets to apply those changes to the other products.
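One way such a consistency check could work is sketched below in Python. Everything here is an assumption made for illustration: the product names, the symbol codes, the shape of a symbol definition, and the `fingerprint` and `find_inconsistencies` helpers are all hypothetical. The idea is to reduce each product's definition of a symbol to a fingerprint and report every symbol whose fingerprints disagree or that is missing from a product.

```python
import hashlib
import json


def fingerprint(definition):
    """Hash a symbol definition in a way that ignores dictionary key order."""
    canonical = json.dumps(definition, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def find_inconsistencies(products):
    """Report symbols whose definitions differ between products.

    `products` maps a product name to {symbol_code: definition}.
    The result maps each inconsistent symbol code to
    {product_name: fingerprint, or None if the symbol is missing}.
    """
    all_codes = set()
    for symbols in products.values():
        all_codes.update(symbols)

    report = {}
    for code in sorted(all_codes):
        prints = {
            name: fingerprint(symbols[code]) if code in symbols else None
            for name, symbols in products.items()
        }
        if len(set(prints.values())) > 1:
            report[code] = prints
    return report


# Hypothetical data: two products with three made-up symbol codes.
products = {
    "product_a": {
        "SYM-1": {"frame": "rectangle", "fill": "blue"},
        "SYM-2": {"frame": "diamond", "fill": "red"},
    },
    "product_b": {
        "SYM-1": {"fill": "blue", "frame": "rectangle"},  # same, reordered
        "SYM-2": {"frame": "diamond", "fill": "orange"},  # changed in one place
        "SYM-3": {"frame": "circle", "fill": "green"},    # never ported
    },
}

report = find_inconsistencies(products)
```

Here `report` flags `SYM-2` (changed in one product only) and `SYM-3` (absent from `product_a`), while `SYM-1` passes because only the key order differs.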

But we don’t rely solely on tests. We also rely on people. A military symbology developer for LuciadRIA is often a backup for military symbology in other products. That means they get included in code reviews and consulted during development. They even get to participate in customer support so they can get to know who they’re developing for and see things from a customer’s perspective.

A Commitment to You

In the Luciad portfolio, military symbology support is one of the oldest components, but it doesn’t show its age.

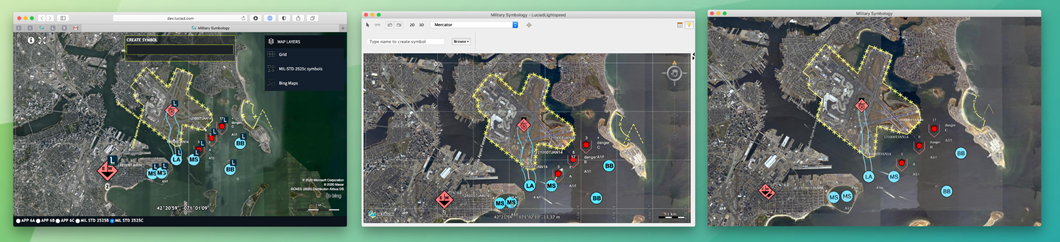

Throughout the years, military symbology support has modernized and adapted to become mature, efficient, and consistent. This makes the Luciad product portfolio an ideal candidate for cross-platform scenarios, where you create a tactical situation on one device and then push it onto another platform in the field.

To illustrate that the symbology looks the same on every platform, we’ve even aligned our sample code so that it is consistent across all of them.

Military symbology samples throughout the Luciad product portfolio.

We look forward to continuing to evolve the Luciad product portfolio to meet the latest standards and, just as important, to meet and exceed your expectations.

That’s our commitment to you.

Want to try the Luciad Portfolio’s military symbology capabilities for yourself? See our interactive demo on the Luciad Developer Platform.

---

1. The six visualization technologies:

– LuciadLightspeed software rendering

– LuciadLightspeed hardware-accelerated rendering

– LuciadRIA software rendering

– LuciadRIA hardware-accelerated rendering

– LuciadMobile

– LuciadCPillar

2. The broken windows theory is a criminological theory that states that minor signs of disorder encourage further crime and disorder, including serious crimes. For automated testing, it means that one failed test can quickly lead to a situation where the number of failed tests is out of control.

3. A green build is an automated build of a software application where all tests succeed. It greatly improves confidence in the product.